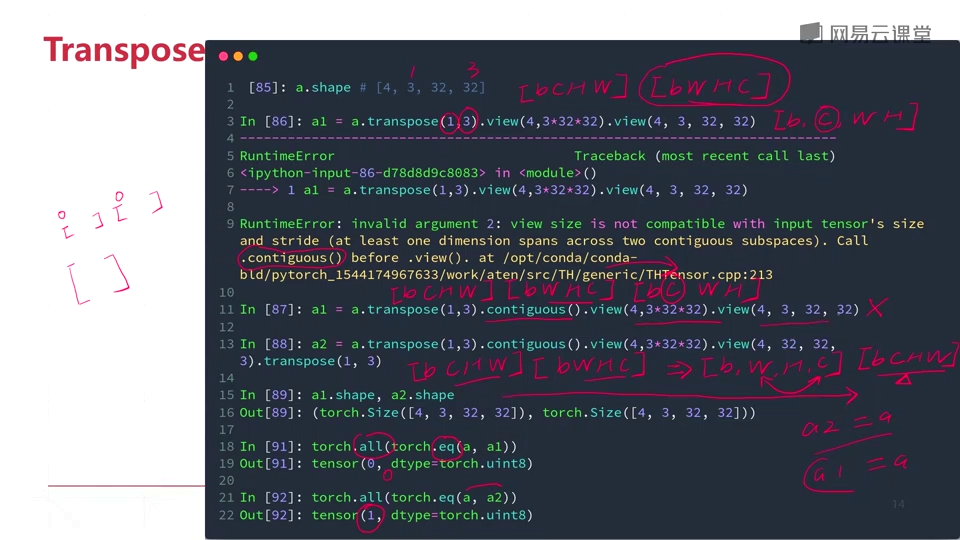

To visualize the layout, consider a contiguous array such as `x = torch.arange(12)`. For a contiguous 3-D tensor `y`, the k-th stride is the product of the sizes of all dimensions that come after the k-th axis, e.g., `y.stride(0) = y.size(1) * y.size(2)`, `y.stride(1) = y.size(2)`, `y.stride(2) = 1`.

The `torch.permute()` method performs a permute operation on a PyTorch tensor. It returns a view of the input tensor with its dimensions permuted and does not make a copy of the original tensor. We can also permute a tensor with `Tensor.permute()`. For example, a tensor with dimensions [2, 3] can be permuted to [3, 2]. Note that `permute()` needs the positions of all axes, and the number of positions must equal the number of dimensions of the tensor: if the encoded layers have size [32, 2], the tensor has only 2 dimensions, so calling `permute()` with 3 dimensions fails. It is also easy to forget that permuting the axes changes the strides along with the shape, so the result is generally no longer contiguous.

The contiguity check works as follows. We first create a tensor, then transpose it to obtain a new tensor with a non-contiguous memory layout, so `transposed_tensor.is_contiguous()` returns `False`. By calling `contiguous()` on the transposed tensor, we create a new tensor with a contiguous memory layout, so `contiguous_tensor.is_contiguous()` returns `True`.

Reordering axes also comes up when working with images, for example after reading an image with `cv2.imread` (with `cv2`, `numpy as np`, `torch`, and `torchvision.utils as vutils` imported). In torchvision, the `draw_keypoints()` function can be used to draw keypoints on images. It can be used with torchvision's KeypointRCNN loaded with `keypointrcnn_resnet50_fpn()`, whose output is a list of dictionaries.
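The stride rule and the contiguity check described above can be sketched as follows. This is a minimal illustration, not the original author's code: the shape (2, 3, 2) and the choice of `transpose(0, 2)` are assumptions made only so that the numbers are easy to follow.

```python
import torch

x = torch.arange(12)   # contiguous 1-D tensor holding 0..11
y = x.view(2, 3, 2)    # assumed 3-D shape, chosen for illustration

# In a contiguous tensor each stride is the product of the later sizes.
print(y.stride())      # (6, 2, 1)

# Transposing swaps strides without moving data, so the view is
# no longer laid out contiguously in memory.
transposed_tensor = y.transpose(0, 2)
print(transposed_tensor.stride())         # (1, 2, 6)
print(transposed_tensor.is_contiguous())  # False

# contiguous() copies the data into a fresh, densely packed layout.
contiguous_tensor = transposed_tensor.contiguous()
print(contiguous_tensor.is_contiguous())  # True
print(contiguous_tensor.stride())         # (6, 2, 1)
```

The values are identical before and after `contiguous()`; only the memory layout differs.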
Why is `contiguous()` used in conjunction with `permute()`?

In PyTorch, the `contiguous()` function returns a tensor that has the same data as the input tensor but with a contiguous memory layout (if the input is already contiguous, it is returned as-is). In other words, it ensures that the elements of the resulting tensor are stored in a continuous block of memory, allowing for efficient memory access and operations. When you perform operations like slicing, indexing, or transposing on a tensor, the resulting tensor might have a non-contiguous memory layout. This can negatively affect the performance of certain tensor operations. By calling `contiguous()` on the tensor, you can obtain a tensor with a contiguous memory layout, which can improve performance in some cases.

A related function is `torch.moveaxis()`, an alias for `torch.movedim()`. The terminology is taken from NumPy, and the function is equivalent to NumPy's `moveaxis` function. Its `input` parameter (Tensor) is the input tensor. Suppose `a` is a 3D PyTorch tensor and we want to permute its dimensions so that the innermost dimension (2) becomes the outermost (0). Yes, this functionality can be achieved with `permute`, but moving one axis while keeping the relative positions of all others is a common enough use case to warrant its own syntactic sugar.
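To make the comparison between `permute` and `movedim` concrete, here is a small sketch. The tensor `a` and its shape (2, 3, 4) are illustrative assumptions; the point is only that moving dimension 2 to position 0 gives the same result either way.

```python
import torch

# Assumed example tensor; any 3-D tensor would do.
a = torch.arange(24).reshape(2, 3, 4)

# permute needs the position of every axis.
via_permute = a.permute(2, 0, 1)

# movedim names only the axis that moves; the others keep their order.
via_movedim = torch.movedim(a, 2, 0)

# moveaxis is an alias for movedim.
via_moveaxis = torch.moveaxis(a, 2, 0)

print(via_permute.shape)                       # torch.Size([4, 2, 3])
print(torch.equal(via_permute, via_movedim))   # True
print(torch.equal(via_movedim, via_moveaxis))  # True
```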

`torch.permute(input, dims) → Tensor`

Returns a view of the original tensor `input` with its dimensions permuted.

In this example, the original tensor has shape (2, 3, 4), and we use `permute()` to reorder the dimensions with `permuted_tensor = tensor.permute(1, 2, 0)`, resulting in a view with shape (3, 4, 2). The first dimension (with size 2) is moved to the last position, the second dimension (with size 3) is moved to the first position, and the third dimension (with size 4) is moved to the second position.
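A runnable version of this example might look like the sketch below. Filling the tensor with `torch.arange(24)` is an assumption made so that individual elements can be traced; the source does not say how the original tensor was created.

```python
import torch

# Assumed contents: the values 0..23 arranged in shape (2, 3, 4).
tensor = torch.arange(24).reshape(2, 3, 4)

# Permute the dimensions to get a view of shape (3, 4, 2).
permuted_tensor = tensor.permute(1, 2, 0)

print(tensor.shape)           # torch.Size([2, 3, 4])
print(permuted_tensor.shape)  # torch.Size([3, 4, 2])

# permute() returns a view, so both tensors share the same storage.
shares_storage = permuted_tensor.data_ptr() == tensor.data_ptr()
print(shares_storage)         # True

# Element [i, j, k] of the original is element [j, k, i] of the view.
same_element = tensor[1, 2, 3].item() == permuted_tensor[2, 3, 1].item()
print(same_element)           # True
```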

In PyTorch, the `permute()` function is used to rearrange the dimensions of a tensor according to a specified order. This can be useful in various deep learning scenarios, such as when you need to change the dimension order of your input data to match the expected input format of a model. To switch a tensor from one dimension format to another in PyTorch, we use `permute`.

The function takes a sequence of integers as arguments, representing the new order of the dimensions. The length of the sequence should match the number of dimensions in the input tensor. For instance, permuting a tensor of shape (2, 3, 4) with the order (1, 2, 0) gives a tensor of shape (3, 4, 2).

Parameters: `dims` (List[int]): the desired ordering of dimensions.

The same operation is also available as a module class with the constructor signature `(dims: List[int])`. This module returns a view of the tensor input with its dimensions permuted, and its `forward(x: Tensor) → Tensor` method defines the computation performed at every call.
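As an illustration of changing the dimension order to match a model's expected format, the sketch below converts a height x width x channels layout into channels x height x width. The layout names, the 4 x 6 x 3 shape, and the random data are assumptions for illustration only. The second half shows what happens when the length of the sequence does not match the number of dimensions.

```python
import torch

# Stand-in for an image in height x width x channels (HWC) order.
# Shape and values are illustrative assumptions, not real image data.
image_hwc = torch.rand(4, 6, 3)

# Reorder to channels x height x width (CHW).
image_chw = image_hwc.permute(2, 0, 1)
print(image_chw.shape)  # torch.Size([3, 4, 6])

# The number of positions passed must match the tensor's dimensionality.
encoded = torch.zeros(32, 2)   # a 2-D tensor
try:
    encoded.permute(1, 0, 2)   # three positions for a 2-D tensor
    mismatch_rejected = False
except RuntimeError:
    mismatch_rejected = True
print(mismatch_rejected)
```

For the 2-D case the valid call is `encoded.permute(1, 0)`, which yields shape (2, 32).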
